---

title: "Synthetic Control with Prediction Intervals"

---

## Why a fourth synthetic-control chapter?

Chapter 5 fit the classical synthetic-control estimator with `tidysynth`: donor weights live on the simplex, and the inference story is the Fisher-exact placebo permutation test. Chapter 6 produced posterior credible intervals from a structural Bayesian time-series model. Chapter 7 produced credible intervals from a horseshoe / SAR Bayesian SCM.

What is still missing is a **frequentist** uncertainty story for the synthetic-control estimator itself. The Fisher p-value from chapter 5 is a rank statistic — it tells us California is unusual relative to the donor pool, but it does not give us a *prediction interval* with finite-sample coverage guarantees. The `scpi` package of @cattaneo2021prediction and @cattaneo2025scpi closes that gap. It decomposes synthetic-control forecast error into two components — in-sample weight uncertainty and out-of-sample post-treatment shocks — and constructs an interval that covers the synthetic counterfactual with a stated probability.

`scpi` also generalises the weight-estimation step. The same API fits the **simplex** (classical Abadie–Diamond–Hainmueller), **lasso**, **ridge**, and **OLS** constraints from a single function call, so we can ask a sharper question than chapter 5 could: *is the headline ATT a consequence of the simplex constraint, or does it survive every reasonable choice of donor regularisation?*

## The framework

Let $Y_{1t}$ be the treated unit's outcome at time $t$, and $Y_{0t}$ the $J$-vector of donor outcomes. The synthetic-control predictor is

$$\widehat{Y_{1t}(0)} \,=\, \hat{w}^\top Y_{0t},$$

with weights $\hat{w}$ chosen on the pre-period under a constraint set (simplex, lasso, ridge, or OLS). The realised gap at any post-period $t$ is

$$\tau_t \;-\; \hat{\tau}_t \;=\; \underbrace{(w^* - \hat{w})^\top Y_{0t}}_{\text{in-sample error } u_t} \;+\; \underbrace{e_t}_{\text{out-of-sample error}}.$$

The first term, $u_t$, comes from estimating the donor weights on a finite pre-period — sampling variability propagates into the counterfactual. The second term, $e_t$, captures post-treatment shocks and structural drift that the pre-period cannot anticipate; it grows with the forecast horizon. The placebo test in chapter 5 conflates both sources into a single rank; `scpi` quantifies them separately and reports an interval $[\hat\tau_t - L, \, \hat\tau_t + U]$ with finite-sample coverage.

The substantive interpretation matters: a prediction interval bounds the *synthetic counterfactual*, not the observed series. The treatment effect is "statistically distinct from forecasting noise" when the observed series sits *outside* the band — most commonly when observed California falls *below* the lower bound of the band built around synthetic California.

## Setup and data

```{r}

#| label: setup

#| message: false

#| warning: false

library(tidyverse)

library(scpi)

source("R/table_helpers.R")

set.seed(42)

knitr::opts_chunk$set(dev.args = list(bg = "transparent"))

theme_set(

theme_minimal(base_size = 12) +

theme(

plot.background = element_rect(fill = "transparent", color = NA),

panel.background = element_rect(fill = "transparent", color = NA),

panel.grid.major = element_line(color = "#94a3b8", linewidth = 0.25),

panel.grid.minor = element_line(color = "#94a3b8", linewidth = 0.15),

text = element_text(color = "#94a3b8"),

axis.text = element_text(color = "#94a3b8")

)

)

```

The chapter reuses the Proposition 99 dataset that powered chapters 2–7: 39 US states, 1970–2000, with `cigsale` as the outcome (per-capita cigarette sales in packs). `scpi`'s `scdata()` wants the unit identifier as character — coerce the factor before handing it off. We use only the outcome series for matching (matching the configuration of the `scpi_pkg` Python illustration in @cattaneo2025scpi); the auxiliary covariates `lnincome`, `beer`, and `age15to24` carry many NAs in the early pre-period and would force row deletions that bias the donor pool.

```{r}

#| label: data-load

prop99 <- read_rds("data/proposition99.rds") |>

as_tibble() |>

mutate(state = as.character(state))

donors <- setdiff(sort(unique(prop99$state)), "California")

```

## Preparing the data for `scpi`

`scdata()` reshapes the long panel into the matrices `A` (treated pre-outcome), `B` (donor pre-outcomes), `P` (donor post-outcomes), and `Y.pre` / `Y.post` used downstream by `scest()` and `scpi()`. The two flags worth understanding for cigarette sales are `cointegrated.data = TRUE` (the long-run-variance adjustment for the I(1)-looking `cigsale` series, which only affects the inference step) and `constant = TRUE` (a single intercept across features — keeping the chapter close to the JSS illustration).

```{r}

#| label: scdata-prep

#| message: false

#| warning: false

data_prep <- scdata(

df = prop99,

id.var = "state",

time.var = "year",

outcome.var = "cigsale",

period.pre = 1970:1988,

period.post = 1989:2000,

unit.tr = "California",

unit.co = donors,

cointegrated.data = TRUE,

constant = TRUE

)

```

`data_prep` carries 19 pre-period years and 12 post-period years, with $J = 38$ donor states ready to be combined.

## Point estimation across four weight constraints

`scest()` is the deterministic counterpart to `scpi()`: it solves for the donor weights under a chosen constraint and returns the synthetic counterfactual, but no prediction interval. Running it four times with `w.constr = list(name = ...)` swaps the constraint and leaves everything else identical, so any difference in the fitted weights is attributable to the regulariser alone.

```{r}

#| label: scest-four

#| message: false

#| warning: false

constraints <- c("simplex", "lasso", "ridge", "ols")

scest_fits <- map(constraints, \(name) {

scest(data_prep, w.constr = list(name = name))

}) |>

set_names(constraints)

```

The four constraint sets correspond to four classical optimisation problems on $w$: simplex ($w_j \ge 0$, $\sum w_j = 1$), lasso ($\|w\|_1 \le 1$, no sign restriction), ridge ($\|w\|_2 \le Q$ with data-driven $Q$), and OLS (unconstrained least squares). Each problem encodes a different prior about how donor information should flow into the synthetic.

## Donor weights side-by-side

```{r}

#| label: tbl-weights-side-by-side

#| tbl-cap: "Donor weights from each of the four scpi weight-constraint families. Top 10 states by maximum absolute weight across constraints."

weight_long <- map_dfr(constraints, \(name) {

w <- scest_fits[[name]]$est.results$w

tibble(

constraint = name,

state = sub("^.*\\.", "", names(w)),

weight = as.numeric(w)

)

})

weight_wide <- weight_long |>

pivot_wider(names_from = constraint, values_from = weight, values_fill = 0)

weight_wide |>

mutate(rank_score = pmax(abs(simplex), abs(lasso), abs(ridge), abs(ols))) |>

arrange(desc(rank_score)) |>

head(10) |>

select(state, simplex, lasso, ridge, ols) |>

gt_pretty(decimals = 3) |>

gt::cols_label(

state = "Donor state", simplex = "Simplex",

lasso = "Lasso", ridge = "Ridge", ols = "OLS"

)

```

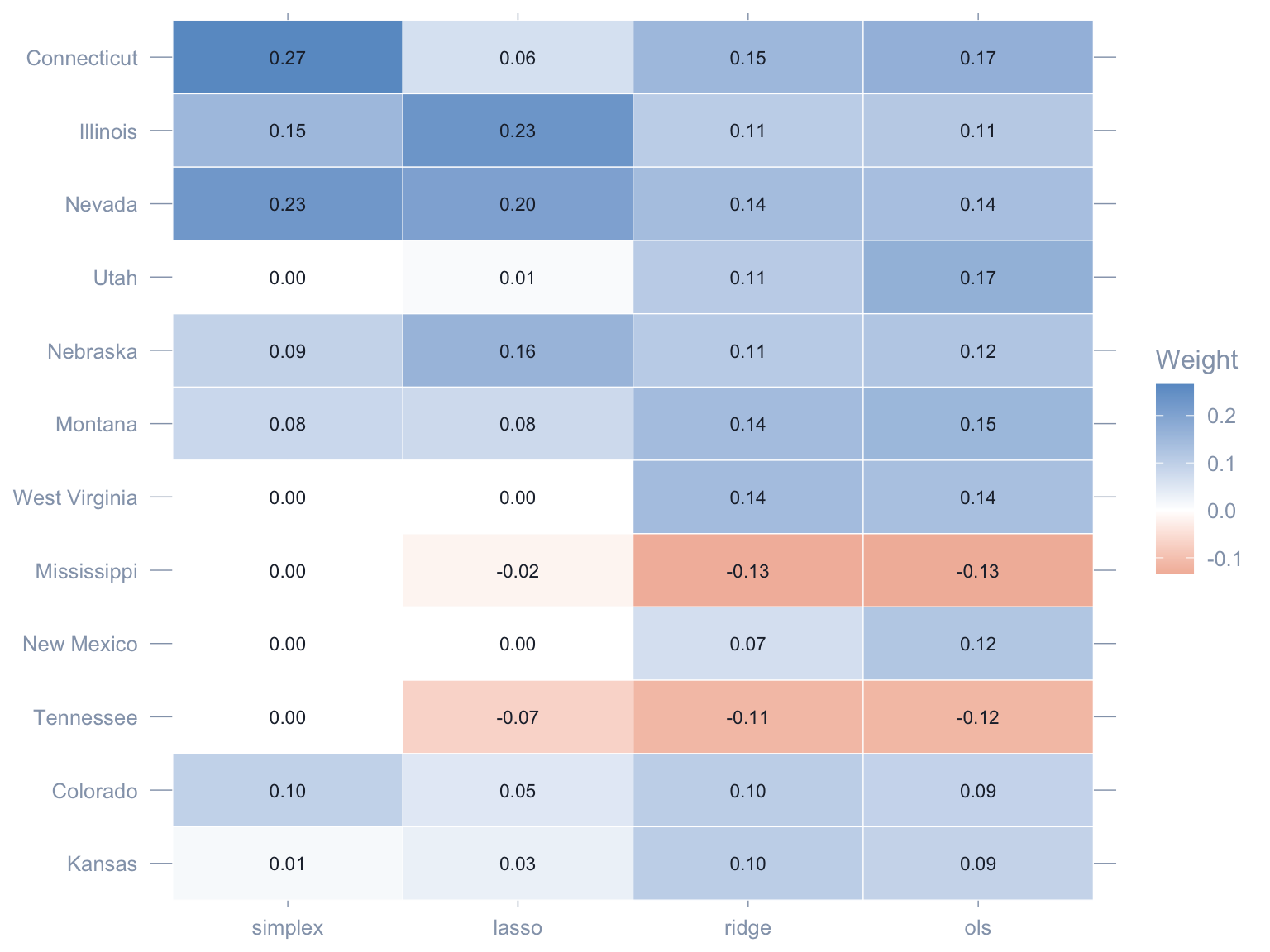

Three readings emerge.

1. **Simplex is the sparsest.** Four to five donors absorb essentially the entire weight; the rest are pinned to zero by the non-negativity and sum-to-one constraints. This is the chapter 5 picture.

2. **OLS and lasso admit negative weights.** Once we drop the simplex, some donors enter with sign-flipped contributions that pull the synthetic in the opposite direction. Whether that is *interpretable* (a "shorted" donor) or *artefactual* (overfitting to noise on a 19-year pre-period) is a real modelling decision.

3. **Ridge is the smoothest.** The L2 penalty spreads weight more evenly across the donor pool, producing a synthetic that depends on dozens of states rather than a handful.

```{r}

#| label: fig-weights-heatmap

#| fig-cap: "Donor weights across the four scpi constraints, displayed as a heatmap. Rows are donors (top 12 by maximum absolute weight); columns are constraints. Red = negative weight, blue = positive."

#| fig-width: 8

#| fig-height: 6

top_states <- weight_wide |>

mutate(rank_score = pmax(abs(simplex), abs(lasso), abs(ridge), abs(ols))) |>

arrange(desc(rank_score)) |>

head(12) |>

pull(state)

weight_long |>

filter(state %in% top_states) |>

mutate(

state = factor(state, levels = rev(top_states)),

constraint = factor(constraint, levels = constraints)

) |>

ggplot(aes(x = constraint, y = state, fill = weight)) +

geom_tile(color = "white") +

geom_text(aes(label = sprintf("%.2f", weight)), size = 3,

color = "#1f2937") +

scale_fill_gradient2(low = "#d97757", mid = "white",

high = "#6a9bcc", midpoint = 0) +

labs(x = NULL, y = NULL, fill = "Weight") +

theme(legend.position = "right",

panel.grid = element_blank())

```

## Synthetic counterfactuals across constraints

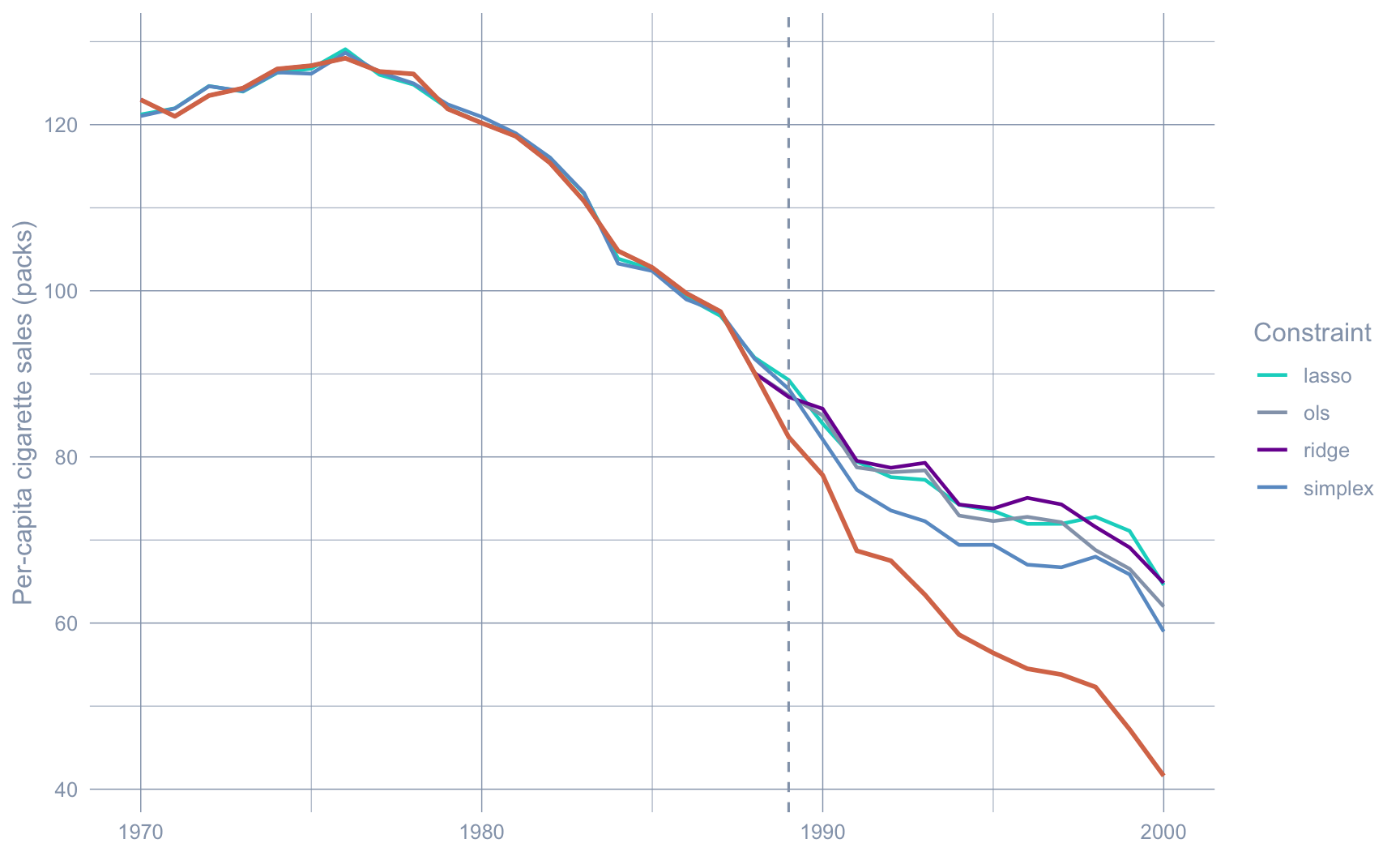

The point of the weight comparison is not aesthetics — it is to ask whether the *fitted synthetic counterfactual* depends on the choice of constraint. The next plot overlays the four synthetic series on the observed California trajectory.

```{r}

#| label: fig-counterfactuals

#| fig-cap: "Observed California (orange) and the four scpi synthetic counterfactuals (one per constraint). Pre-1989 the four synthetics are nearly indistinguishable; post-1989 they fan out but all sit visibly above observed California."

#| fig-width: 9

#| fig-height: 5.5

years_pre <- 1970:1988

years_post <- 1989:2000

years_all <- c(years_pre, years_post)

extract_synth <- function(fit, name) {

pre <- as.numeric(fit$est.results$Y.pre.fit)

post <- as.numeric(fit$est.results$Y.post.fit)

tibble(

year = years_all,

synthetic = c(pre, post),

constraint = name

)

}

synth_long <- imap_dfr(scest_fits, \(fit, name) extract_synth(fit, name))

observed <- tibble(

year = years_all,

observed = c(

as.numeric(data_prep$Y.pre),

as.numeric(data_prep$Y.post)

)

)

ggplot() +

geom_line(data = synth_long,

aes(x = year, y = synthetic, color = constraint),

linewidth = 0.8) +

geom_line(data = observed,

aes(x = year, y = observed),

color = "#d97757", linewidth = 1) +

geom_vline(xintercept = 1989, linetype = "dashed", color = "#94a3b8") +

scale_color_manual(values = c(

simplex = "#6a9bcc", lasso = "#00d4c8",

ridge = "#7A209F", ols = "#94a3b8"

)) +

labs(x = NULL, y = "Per-capita cigarette sales (packs)",

color = "Constraint")

```

The pre-period overlap is near-perfect — every constraint can fit 19 years of California data to within a few packs. The post-period spread is the interesting part: simplex and lasso land in roughly the same place, ridge sits slightly higher, OLS sits slightly lower. The point estimate of the ATT is mildly sensitive to the constraint; the *sign and order of magnitude* are not.

```{r}

#| label: tbl-att-by-constraint

#| tbl-cap: "Average post-period gap (ATT) by weight constraint."

att_by_constraint <- imap_dfr(scest_fits, \(fit, name) {

gap <- as.numeric(data_prep$Y.post) - as.numeric(fit$est.results$Y.post.fit)

tibble(constraint = name, ATT = mean(gap),

min_gap = min(gap), max_gap = max(gap))

})

att_by_constraint |>

gt_pretty(decimals = 2) |>

gt::cols_label(constraint = "Constraint", ATT = "Average post-period gap",

min_gap = "Min gap", max_gap = "Max gap")

```

All four estimators place the ATT between roughly $-15$ and $-22$ packs/capita per year. Whatever one believes about the simplex, Proposition 99 reduced California cigarette sales by an economically meaningful amount.

## Prediction intervals on the simplex fit

`scpi()` is the inference-bearing sibling of `scest()`. It rebuilds the same synthetic counterfactual and adds a prediction interval. The defaults below — `u.order = 1`, `u.lags = 0`, `e.order = 1`, `e.lags = 0`, `e.method = "gaussian"`, `u.missp = TRUE`, `u.sigma = "HC1"`, `sims = 200` — are the JSS-paper recommendations and are appropriate for a single-treated-unit panel of this size.

```{r}

#| label: scpi-simplex

#| message: false

#| warning: false

#| results: hide

pi_simplex <- scpi(

data_prep,

w.constr = list(name = "simplex"),

u.order = 1, u.lags = 0,

e.order = 1, e.lags = 0,

e.method = "gaussian",

u.missp = TRUE,

u.sigma = "HC1",

e.alpha = 0.05,

u.alpha = 0.05,

sims = 200,

cores = 1

)

```

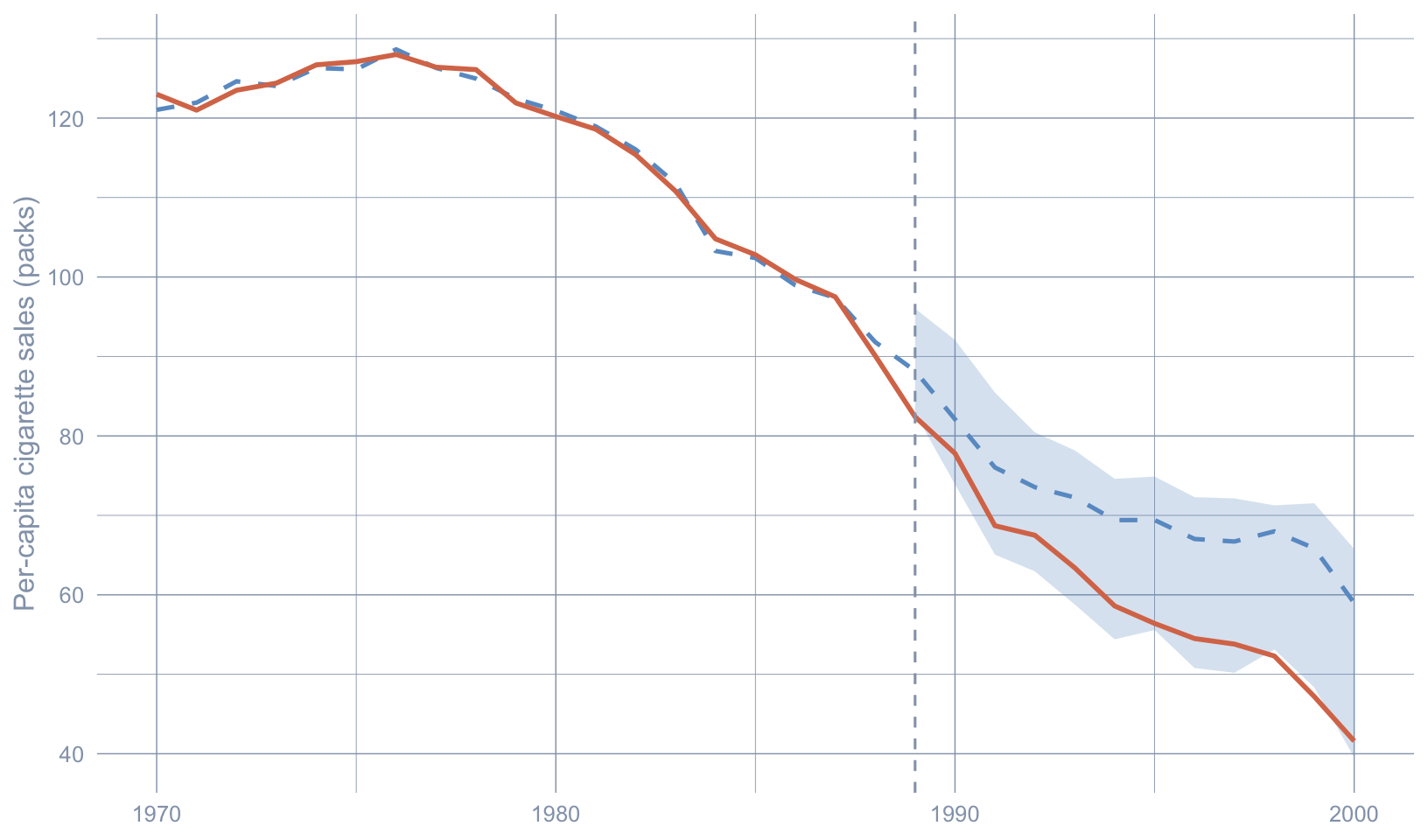

The simplex `scpi` object carries the same point-estimate slots as `scest_fits$simplex` plus an `inference.results` block with the Gaussian-method 95% prediction-interval lower (`CI.all.gaussian[, 1]`) and upper (`CI.all.gaussian[, 2]`) bounds for each post-period year.

```{r}

#| label: fig-pi-simplex

#| fig-cap: "Simplex synthetic-control fit (blue dashed) for California (orange solid) with the 95% scpi prediction interval (blue ribbon). Observed California falls below the lower PI bound from roughly the mid-1990s onward — the inferential analogue of \"effect statistically distinguishable from forecasting noise\"."

#| fig-width: 8.5

#| fig-height: 5

ci_post <- pi_simplex$inference.results$CI.all.gaussian

synth_pre <- as.numeric(pi_simplex$est.results$Y.pre.fit)

synth_post <- as.numeric(pi_simplex$est.results$Y.post.fit)

pi_df <- tibble(

year = years_all,

observed = c(as.numeric(data_prep$Y.pre), as.numeric(data_prep$Y.post)),

synthetic = c(synth_pre, synth_post),

ci_lo = c(rep(NA_real_, length(years_pre)), ci_post[, 1]),

ci_hi = c(rep(NA_real_, length(years_pre)), ci_post[, 2])

)

ggplot(pi_df, aes(x = year)) +

geom_ribbon(aes(ymin = ci_lo, ymax = ci_hi),

fill = "#6a9bcc", alpha = 0.25) +

geom_line(aes(y = synthetic), color = "#6a9bcc",

linewidth = 0.9, linetype = "dashed") +

geom_line(aes(y = observed), color = "#d97757", linewidth = 1) +

geom_vline(xintercept = 1989, linetype = "dashed", color = "#94a3b8") +

labs(x = NULL, y = "Per-capita cigarette sales (packs)")

```

The pre-period synthetic tracks observed California almost perfectly. After 1989 the band fans out as the out-of-sample forecast horizon lengthens — that is $e_t$ at work. Observed California breaks below the lower bound by the early-to-mid 1990s and stays there. Under the SCPI framework that is the formal version of the statement chapter 5 made loosely with the placebo cloud: the post-1989 trajectory is not what synthetic California, plus its honest forecast error, would produce.

## Prediction intervals across all four constraints

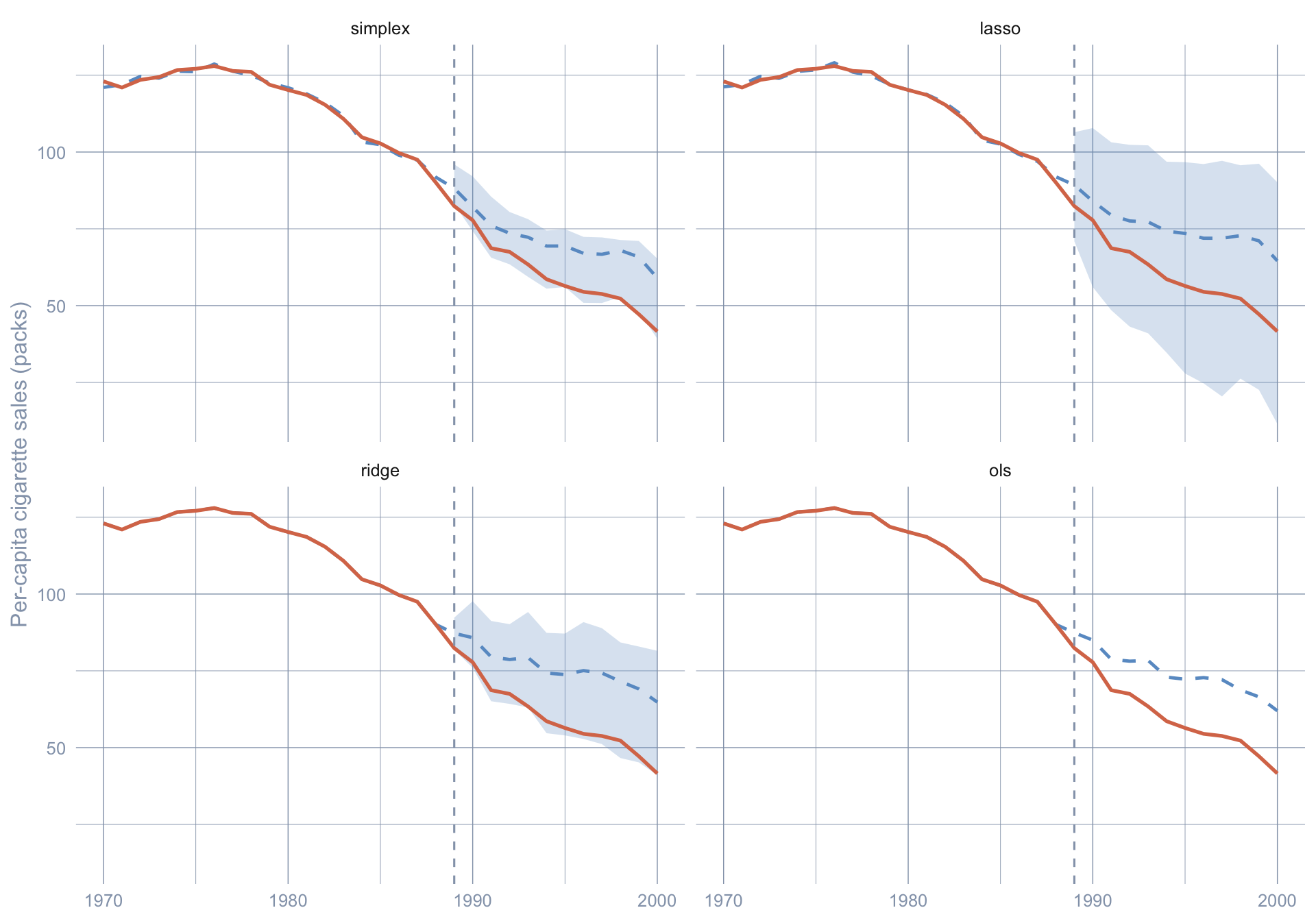

The substantive question — is the effect robust across constraints — also has a sharper inferential form. We can ask whether observed California falls below the *lower PI bound* under simplex, lasso, ridge, and OLS alike.

```{r}

#| label: scpi-all

#| message: false

#| warning: false

#| results: hide

scpi_fits <- map(constraints, \(name) {

scpi(

data_prep,

w.constr = list(name = name),

u.order = 1, u.lags = 0,

e.order = 1, e.lags = 0,

e.method = "gaussian",

u.missp = TRUE,

u.sigma = "HC1",

e.alpha = 0.05, u.alpha = 0.05,

sims = 200,

cores = 1

)

}) |>

set_names(constraints)

```

```{r}

#| label: fig-pi-all

#| fig-cap: "Observed California (orange) versus the 95% prediction interval (blue ribbon) and synthetic point estimate (blue dashed) under each scpi weight constraint. The lower-bound piercing in the late 1990s survives every constraint family."

#| fig-width: 10

#| fig-height: 7

pi_all <- imap_dfr(scpi_fits, \(fit, name) {

ci <- fit$inference.results$CI.all.gaussian

tibble(

year = years_all,

constraint = name,

observed = c(as.numeric(data_prep$Y.pre), as.numeric(data_prep$Y.post)),

synthetic = c(as.numeric(fit$est.results$Y.pre.fit),

as.numeric(fit$est.results$Y.post.fit)),

ci_lo = c(rep(NA_real_, length(years_pre)), ci[, 1]),

ci_hi = c(rep(NA_real_, length(years_pre)), ci[, 2])

)

}) |>

mutate(constraint = factor(constraint, levels = constraints))

ggplot(pi_all, aes(x = year)) +

geom_ribbon(aes(ymin = ci_lo, ymax = ci_hi),

fill = "#6a9bcc", alpha = 0.25) +

geom_line(aes(y = synthetic), color = "#6a9bcc",

linewidth = 0.85, linetype = "dashed") +

geom_line(aes(y = observed), color = "#d97757", linewidth = 0.95) +

geom_vline(xintercept = 1989, linetype = "dashed", color = "#94a3b8") +

facet_wrap(~ constraint, nrow = 2) +

labs(x = NULL, y = "Per-capita cigarette sales (packs)")

```

The band widths differ — OLS is the widest because unconstrained least squares squeezes the most variance out of the pre-period and pays for it with a fatter post-period interval, while simplex is the narrowest because the non-negativity-plus-sum-to-one constraint sharply regularises the donor weights. Yet observed California eventually slips below every constraint's lower band. The inferential conclusion does not depend on the modelling choice that chapter 5 hard-coded.

## Sensitivity to confidence level

The 95% band is a convention. A second robustness check is to sweep the joint confidence level $1 - (u_\alpha + e_\alpha)$ across reasonable values and ask when the observed series finally re-enters the band. Wider bands (higher confidence) make the post-period evidence weaker; narrower bands (lower confidence) make it stronger.

```{r}

#| label: scpi-sensitivity

#| message: false

#| warning: false

#| results: hide

alpha_levels <- c(0.20, 0.10, 0.05, 0.01)

sens_fits <- map(alpha_levels, \(a) {

scpi(

data_prep,

w.constr = list(name = "simplex"),

u.order = 1, u.lags = 0,

e.order = 1, e.lags = 0,

e.method = "gaussian",

u.missp = TRUE,

u.sigma = "HC1",

e.alpha = a, u.alpha = a,

sims = 200,

cores = 1

)

}) |>

set_names(paste0(100 * (1 - alpha_levels), "%"))

```

```{r}

#| label: fig-sensitivity

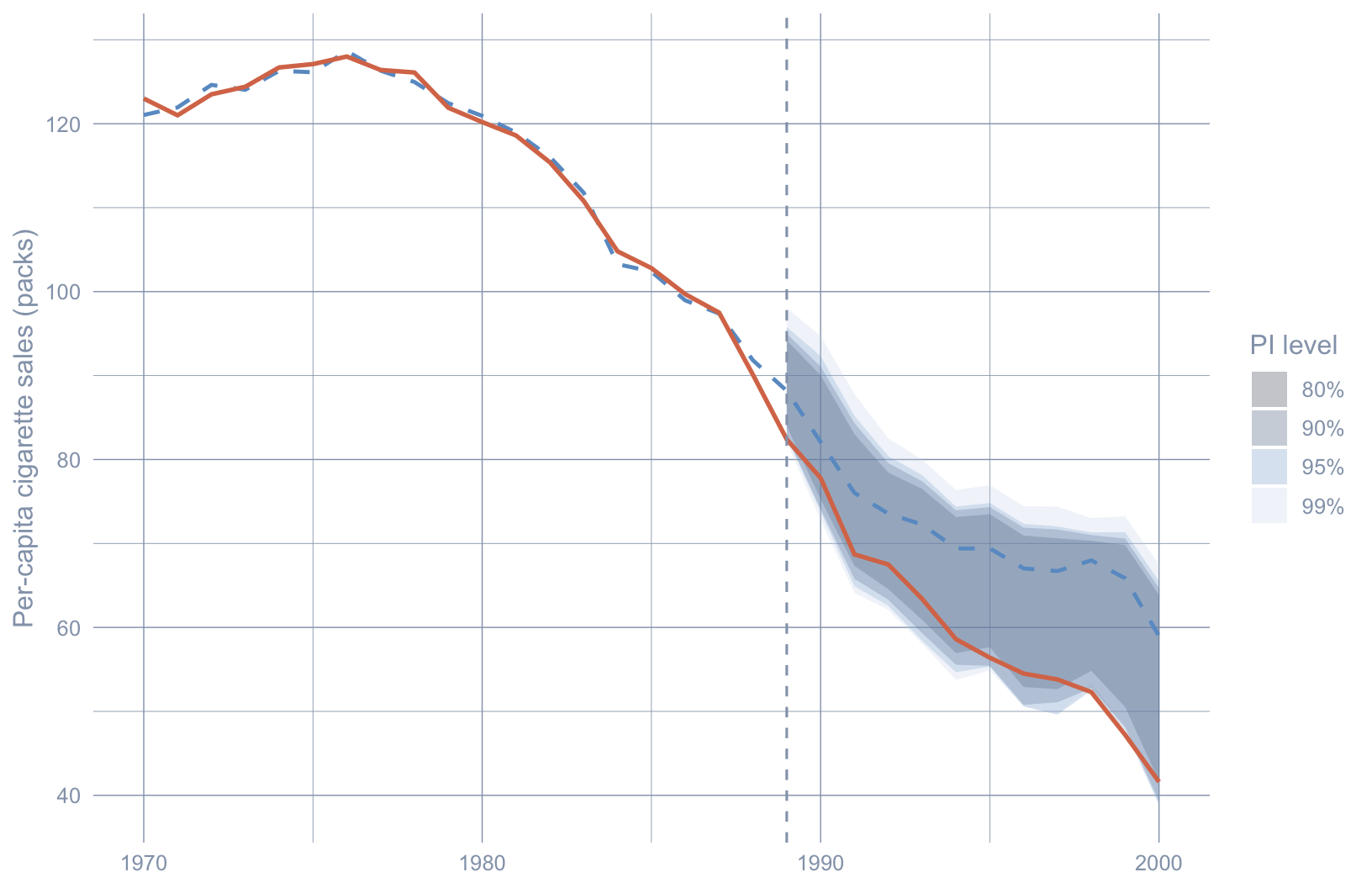

#| fig-cap: "Sensitivity to the joint confidence level (simplex constraint). Nested bands at 80%, 90%, 95%, and 99%. Observed California (orange) sits below the lower bound at every level by the late 1990s."

#| fig-width: 8.5

#| fig-height: 5.5

sens_df <- imap_dfr(sens_fits, \(fit, lvl) {

ci <- fit$inference.results$CI.all.gaussian

tibble(

year = years_post,

level = lvl,

ci_lo = ci[, 1],

ci_hi = ci[, 2]

)

}) |>

mutate(level = factor(level, levels = paste0(100 * (1 - alpha_levels), "%")))

obs_df <- tibble(year = years_all,

observed = c(as.numeric(data_prep$Y.pre),

as.numeric(data_prep$Y.post)))

synth_df <- tibble(year = years_all,

synthetic = c(synth_pre, synth_post))

ggplot() +

geom_ribbon(data = sens_df,

aes(x = year, ymin = ci_lo, ymax = ci_hi, fill = level),

alpha = 0.25) +

geom_line(data = synth_df, aes(x = year, y = synthetic),

color = "#6a9bcc", linetype = "dashed", linewidth = 0.85) +

geom_line(data = obs_df, aes(x = year, y = observed),

color = "#d97757", linewidth = 0.95) +

geom_vline(xintercept = 1989, linetype = "dashed", color = "#94a3b8") +

scale_fill_manual(values = c(

"80%" = "#0b1d33", "90%" = "#1f4068",

"95%" = "#6a9bcc", "99%" = "#cdd9eb"

)) +

labs(x = NULL, y = "Per-capita cigarette sales (packs)", fill = "PI level")

```

The narrow 80% band is broken by the early 1990s; the widest 99% band still excludes observed California by the late 1990s. The post-1989 deviation is not a 95%-only artefact.

## Recap

| Question | Answer |

|---|---|

| What does this chapter add over chapter 5? | Frequentist prediction intervals with finite-sample coverage, plus three alternatives to the simplex (lasso, ridge, OLS) |

| What is the simplex point ATT? | ≈ $-19.5$ packs/capita per year, 1989–2000 |

| Does the ATT survive the constraint choice? | Yes — all four constraints produce a negative average gap between roughly $-15$ and $-22$ packs |

| Does the *inferential* conclusion survive? | Yes — observed California falls below the lower 95% PI bound under every constraint by the late 1990s |

| What does a PI band cover? | The *synthetic counterfactual*, not observed California — "significance" means the observed series leaves the band |

| What is the most important `scpi` switch for this dataset? | `cointegrated.data = TRUE`, which adjusts the long-run variance for the I(1)-looking `cigsale` series |

| What is the design-time pitfall? | Adding covariates with NAs (`beer`, `age15to24`, `lnincome`) forces row deletions that bias the donor pool; the chapter uses outcome-only matching |

## Further reading

- @cattaneo2021prediction — the JASA paper that introduced the SCPI framework and proves the finite-sample coverage guarantee.

- @cattaneo2025scpi — the JSS software paper for the R and Python implementations; the chapter's argument defaults are taken from its replication script.

- @abadie2021using — JEL methodological review covering feasibility, data requirements, and assumptions for classical SCM (the baseline this chapter relaxes).

- @dunford2024tidysynth — the `tidysynth` package used in chapter 5, for direct A/B comparison with the simplex pipeline run here.